Essentially, Bayesian reasoning balances what we already know with what we have just discovered.

Reasoning and informed decisions rely on accurate assumptions. Yet there is a paradox: humans favor stories over statistics. Although storytelling is valuable, it often undermines rational choice. To decide better, we must recognize these cognitive traps and master the art of Bayesian reasoning.

The Story

I began my career as a manufacturing engineering intern before transitioning to the software industry. I was obsessed with understanding why systems and structures fail. My focus shifted from simple maintenance to a deeper inquiry: How do we protect these systems, and by what methods can we make them better?

During a meeting in Bursa, Turkey’s industrial hub, these same questions were on my mind. My customer, a crane manufacturer, was looking for a way to validate designs under real-world conditions as early as possible. Computer simulations, specifically Finite Element Analysis (FEA), make this possible by allowing engineers to test virtual prototypes. The company wanted to make the right investment, and they were also considering another brand. One engineer, relying on her past experience, strongly advocated for that brand even though our competitor’s price was four times higher than ours.

I sensed resistance. From our discussion, I realized her FEA experience was elementary; she lacked professional depth and struggled with key modeling concepts. Despite the rigorous scientific reports and benchmarks we provided to prove our solution’s validity, the company’s position remained unchanged. Her personal testimony outweighed both statistical data and obvious financial advantages. At the time, this irrational preference surprised me. Only later did I find the explanation in Bayesian Theory.

Finite Element Analysis (FEA) is a numerical method used to predict how structures behave under physical forces. By dividing a complex model into a mesh of smaller ‘cells’, or elements, the software calculates values at each node to determine the overall structural integrity. Supported by commercial software packages, FEA is an essential tool in many industries, especially aerospace, manufacturing, and construction.
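To make the mesh-and-nodes idea concrete, here is a minimal sketch of the simplest possible case. It assumes a one-dimensional bar that is fixed at one end, pulled by an axial force at the other, and split into equal linear elements. Real FEA packages handle 2D and 3D meshes, many element types, and far larger systems. The function names, the small pure-Python solver, and the steel-bar numbers are all hypothetical choices made for illustration.

```python
def gaussian_solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Augmented matrix [a | b]; copying rows leaves the inputs untouched.
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back substitution.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def bar_displacements(length, area, youngs_modulus, tip_force, n_elements):
    """Nodal displacements of a 1D bar fixed at x=0 with an axial load at the free end."""
    h = length / n_elements                 # length of each element
    k = youngs_modulus * area / h           # element stiffness EA/h
    n_nodes = n_elements + 1
    stiffness = [[0.0] * n_nodes for _ in range(n_nodes)]
    for e in range(n_elements):             # assemble each 2x2 element matrix
        i, j = e, e + 1
        stiffness[i][i] += k
        stiffness[i][j] -= k
        stiffness[j][i] -= k
        stiffness[j][j] += k
    forces = [0.0] * n_nodes
    forces[-1] = tip_force                  # point load on the last node
    # Boundary condition: node 0 is fixed, so drop its row and column.
    reduced = [row[1:] for row in stiffness[1:]]
    return [0.0] + gaussian_solve(reduced, forces[1:])


# Hypothetical steel bar: 2 m long, 1e-4 m^2 cross-section, E = 200 GPa, 10 kN load.
u = bar_displacements(2.0, 1e-4, 200e9, 10_000.0, n_elements=4)
print(u[-1])  # approximately 0.001 m, matching F*L/(E*A)
```

For this load case, linear elements reproduce the exact hand-calculated tip displacement F·L/(E·A), which is a useful sanity check before trusting a larger model.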

People Believe Stories

People are wired for stories: not just fairy tales, but any narrative-driven information. We instinctively trust storytelling over raw data. This is why public speakers are often advised to use stories; they are one of the most effective tools for engaging an audience.

“Those who tell the stories rule the world.”

Native American Proverb

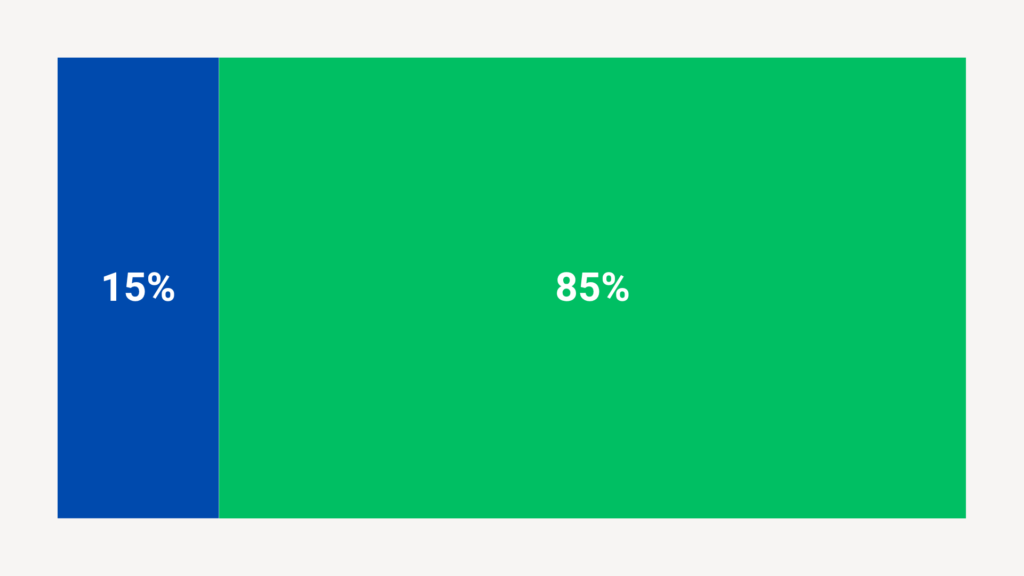


In Thinking, Fast and Slow, Daniel Kahneman presents a classic puzzle: a city has two taxi companies; 85% of the cabs are Green and 15% are Blue. After an accident, a witness identifies the vehicle as Blue, and a court test shows that this witness correctly identifies each color 80% of the time. Given these facts, how likely is it that the taxi involved was actually Blue?

Most people instinctively trust the witness and conclude the taxi was Blue. However, Bayesian Theory challenges this intuition. As Jonathan St. B. T. Evans explains in Thinking and Reasoning, Bayesian Theory, combined with subjective probability and utility, has grown into a mathematical and philosophical system that incorporates both probability theory and decision theory.

The theorem dictates that our confidence in a hypothesis must reflect two factors: our initial belief and the strength of the new evidence. Essentially, Bayesian reasoning balances what we already know with what we have just discovered.

Bayesian Theory
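In symbols, Bayes’ theorem relates the four quantities defined below:

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$$

When there are only two possibilities (A and its complement ¬A), the denominator expands by the law of total probability:

$$P(B) = P(B \mid A)\,P(A) + P(B \mid \neg A)\,P(\neg A)$$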

P(A | B) (Posterior): The updated probability of the hypothesis after accounting for new evidence.

P(B | A) (Likelihood): The probability of observing the evidence, assuming the hypothesis is true.

P(A) (Prior): The initial probability of the hypothesis before considering new data.

P(B) (Evidence): The total probability of observing the evidence under all possible conditions.

“We are not Bayesian by nature… we are prone to ignore the base rate in favor of the specific evidence.”

Daniel Kahneman


To apply the formula to the taxi case, we define our variables as follows:

P(A | B): The probability the taxi is actually Blue, given the witness identifies it as Blue. This is the value we seek to calculate.

P(B | A): The likelihood that the witness identifies a taxi as Blue when it is actually Blue. Since the witness is 80% accurate, this value is 0.80.

P(A) (The Prior): The base probability that the taxi involved is Blue, before considering any testimony. Given the city’s statistics, this value is 0.15.

P(B) (Evidence): The total probability that a witness reports a Blue taxi. This includes both correct identifications and false alarms.

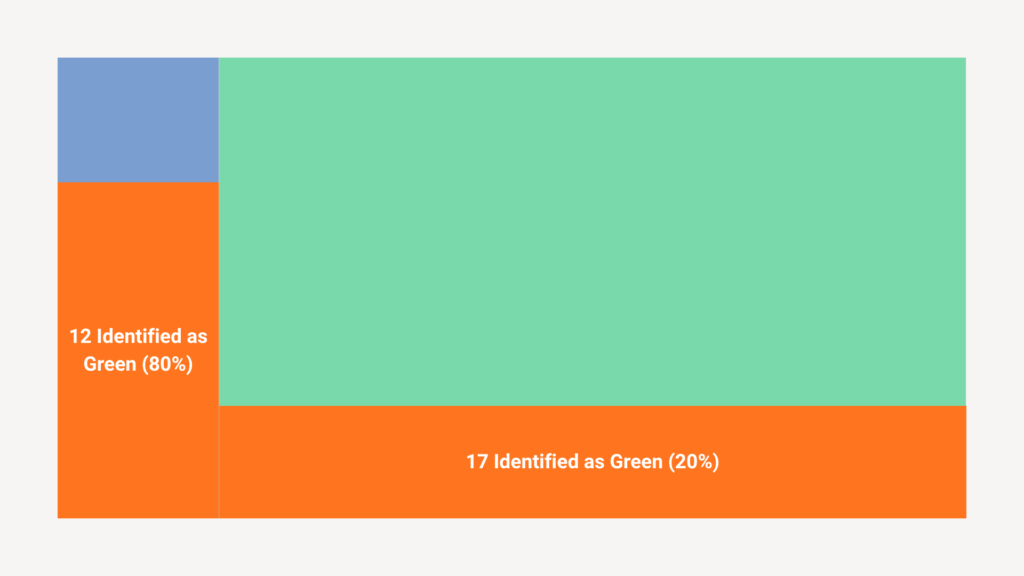

To find P(B), we must sum two distinct scenarios:

- The taxi is Blue and the witness is correct: 0.15 × 0.80 = 0.12

- The taxi is Green and the witness is wrong: 0.85 × 0.20 = 0.17

Together, P(B) = 0.12 + 0.17 = 0.29.

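Putting these values into the formula:

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)} = \frac{0.80 \times 0.15}{0.12 + 0.17} = \frac{0.12}{0.29} \approx 0.414$$

The result is about 0.41: even though the witness is right 80% of the time, there is only a 41% chance that the taxi was actually Blue.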
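The same arithmetic fits in a small Python function. This is a minimal sketch that assumes a binary hypothesis (Blue or not Blue) and a single piece of evidence; the function name `posterior` and its parameter names are illustrative, not taken from any library.

```python
def posterior(prior, p_evidence_given_h, p_evidence_given_not_h):
    """Bayes' theorem for a binary hypothesis H and one piece of evidence E."""
    # Total probability of the evidence: correct identifications plus false alarms.
    p_evidence = p_evidence_given_h * prior + p_evidence_given_not_h * (1 - prior)
    return p_evidence_given_h * prior / p_evidence


# Taxi case: 15% of cabs are Blue; the witness is right 80% of the time,
# so a Green cab is reported as Blue 20% of the time.
p_blue = posterior(prior=0.15, p_evidence_given_h=0.80, p_evidence_given_not_h=0.20)
print(f"P(Blue | witness says Blue) = {p_blue:.3f}")  # P(Blue | witness says Blue) = 0.414
```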

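Bayesian reasoning exposes a simple fact: the human brain tends to neglect base rates and overvalue stories. When an event is rare, a signal pointing to it is often a false alarm; here, a “Blue” report is more likely to come from a misidentified Green cab (0.17) than from a correctly identified Blue one (0.12).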
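To see why rarity matters, the sketch below holds the witness’s reliability at the taxi case’s 80/20 split and varies only the base rate. The extra base rates (50%, 5%, 1%) are hypothetical values chosen for illustration, and `posterior` is an illustrative helper name.

```python
def posterior(prior, hit_rate, false_alarm_rate):
    """P(event | signal) for a binary event, via Bayes' theorem."""
    return hit_rate * prior / (hit_rate * prior + false_alarm_rate * (1 - prior))


# Keep the witness fixed at 80% accuracy and vary how common Blue cabs are.
for prior in (0.50, 0.15, 0.05, 0.01):
    p = posterior(prior, hit_rate=0.80, false_alarm_rate=0.20)
    print(f"base rate {prior:.0%}: P(Blue | 'Blue' report) = {p:.0%}")
```

With these assumptions, the same witness yields an 80% posterior when Blue cabs make up half the fleet, 41% at the city’s 15%, and only about 4% when Blue cabs are 1% of the fleet.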

The 0.80 value comes from a court test: the court evaluated the witness’s reliability under similar conditions and found that the witness correctly identified each color 80% of the time and got it wrong 20% of the time.

Case Study: HR Interview Process

The Scenario

A high-growth tech firm was hiring a Senior Software Architect. The company was looking for a candidate with extensive experience in cloud architecture, specifically AWS and Azure. The role was central to a transformation project intended to modernize the company’s architecture.

Interview Process

The process had three interview stages. The company received 60 applications. After the application window closed, its applicant tracking system eliminated 40 of them, and HR representatives eliminated 5 more after reviewing cover letters and CVs. The remaining 15 candidates were invited to the first round of interviews.

Case

A high-profile candidate with extensive software architecture experience at Amazon and Google passed the automated screening. However, shortly after the first-round interview, he received a rejection email. He and his friends were stunned: by industry standards and experience, he ranked in the top 3% of his field. How could that happen?

The company hired someone else: a candidate with a moderate background but a talent for marketing their own profile. Unfortunately, the transformation initiative failed because the architecture modernization took longer than expected. The new hire left the company after 18 months, and HR is now looking for candidates for the same role, this time with the project in crisis.

Let’s apply Bayesian Reasoning here:

The Prior P(H): His background is solid. He is a former Lead Engineer at Google, a contributor to major open-source projects, and holds a master’s degree from UCLA. Based on this track record, the probability that he is a “High-Performer” is very high (let’s say 85%).

The Evidence P(E):

During the interview, the following happened:

- The candidate joined the Zoom call 3 minutes late.

- He looked slightly nervous and was wearing a faded band T-shirt.

- He stumbled slightly on the first “ice-breaker” question due to apparent nervousness.

- He forgot to ask end-of-interview questions such as:

  - What is the timeline?

  - What does the process look like?

  - Do you have any hesitations about my fit for the role?

The Intuitive HR Decision

The HR Manager immediately felt a “gut instinct” (intuition). She thought: “If he is late for the interview, he will be late for deadlines. If he can’t dress professionally, he won’t fit our corporate culture. He is clearly not as confident as his CV suggests. Outcome: Reject.”

Prior P(H) = 0.85: The baseline probability of high-level competence, based on a decade of proven success.

Likelihood P(E | H) = 0.30: The probability that a brilliant, overworked engineer shows this behavior anyway, for example because of a technical glitch or a disregard for social norms.

False Positive P(E | ¬H) = 0.70: The probability that a low-performing candidate exhibits the same behavior (e.g., being late or unresponsive).

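To determine the final probability, we first calculate the total probability of the evidence P(E), using P(¬H) = 1 − 0.85 = 0.15:

$$P(E) = P(E \mid H)\,P(H) + P(E \mid \neg H)\,P(\neg H) = 0.30 \times 0.85 + 0.70 \times 0.15 = 0.255 + 0.105 = 0.36$$

This result, 0.36, represents the total likelihood of observing the specific behavior (the “evidence”) across both scenarios: a high performer and a low performer.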

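Applying Bayes’ theorem gives the updated probability:

$$P(H \mid E) = \frac{P(E \mid H)\,P(H)}{P(E)} = \frac{0.30 \times 0.85}{0.36} = \frac{0.255}{0.36} \approx 0.71$$

Despite the ‘bad’ evidence of being late, the probability that he is top-tier talent remains remarkably high, at roughly 71%. The HR manager fell into a common cognitive trap: base rate neglect. By focusing on a few unfavorable first impressions, she ignored the overwhelming weight of ten years of proven success.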
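The HR calculation can be checked the same way. This sketch simply plugs in the estimates from the case; the variable names are illustrative, and the 0.30 and 0.70 likelihoods are judgment calls rather than measured rates.

```python
# Estimates from the HR case.
prior_high = 0.85        # P(H): high performer, based on track record
p_e_given_high = 0.30    # P(E | H): a strong candidate still shows this behavior
p_e_given_low = 0.70     # P(E | not H): a weaker candidate shows this behavior

# Law of total probability: P(E) = P(E|H)P(H) + P(E|not H)P(not H)
p_evidence = p_e_given_high * prior_high + p_e_given_low * (1 - prior_high)

# Bayes' theorem: P(H|E) = P(E|H)P(H) / P(E)
posterior_high = p_e_given_high * prior_high / p_evidence

print(f"P(E)     = {p_evidence:.3f}")      # P(E)     = 0.360
print(f"P(H | E) = {posterior_high:.3f}")  # P(H | E) = 0.708
```

Because the prior is so strong, the evidence would have to be more than about 5.7 times as likely for a weak candidate as for a strong one (versus 0.70/0.30 ≈ 2.3 here) before the posterior dropped below 50%.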

Key Takeaways

- Prioritize Statistics Over Stories: The human brain instinctively favors stories, but professional decisions require reviewing the base rates.

- Avoid the WYSIATI Trap: Never assume “What You See Is All There Is.” Look for the relevant data, such as base rates and track records, that lies beyond your immediate observation.

- Apply Bayesian Updating: Treat every new piece of information as a data point to update probabilities, not as a reason to discard years of proven success.

- Distinguish Signal from Noise: When the condition a signal points to is rare, a negative signal (like a single instance of being late) is often a false alarm.

- Balance Intuition with Logic: Use storytelling to engage, but use Bayesian reasoning to decide.
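The idea of updating rather than discarding can be sketched as a loop in which each posterior becomes the prior for the next observation. This is a minimal illustration: the first step reuses the HR-case numbers, while the second piece of evidence and its likelihoods (0.90 and 0.20) are hypothetical. Chaining updates this way also assumes the observations are conditionally independent given the hypothesis.

```python
def update(prior, p_e_given_h, p_e_given_not_h):
    """One Bayesian update: the returned posterior becomes the next prior."""
    numerator = p_e_given_h * prior
    return numerator / (numerator + p_e_given_not_h * (1 - prior))


# Start from the track-record prior in the HR case.
belief = 0.85
evidence_stream = [
    ("awkward first impression", 0.30, 0.70),    # estimates from the HR case
    ("strong system-design round", 0.90, 0.20),  # hypothetical follow-up evidence
]
for label, p_h, p_not_h in evidence_stream:
    belief = update(belief, p_h, p_not_h)
    print(f"after {label}: P(high performer) = {belief:.2f}")
```

Each observation shifts the belief in proportion to how much more likely it is under one hypothesis than the other; no single data point resets it to zero.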

References

Jonathan St B. T. Evans (2017). Thinking and Reasoning: A Very Short Introduction. 1st Edition. Oxford University Press.

Daniel Kahneman (2011). Thinking, Fast and Slow. 1st Edition. Penguin Press.

Disclaimer: The views and opinions expressed in this article are solely my own and do not reflect the official policy or position of any past, present, or future employer or affiliated organisation. This content is intended for informational and educational purposes only and does not constitute professional advice.
